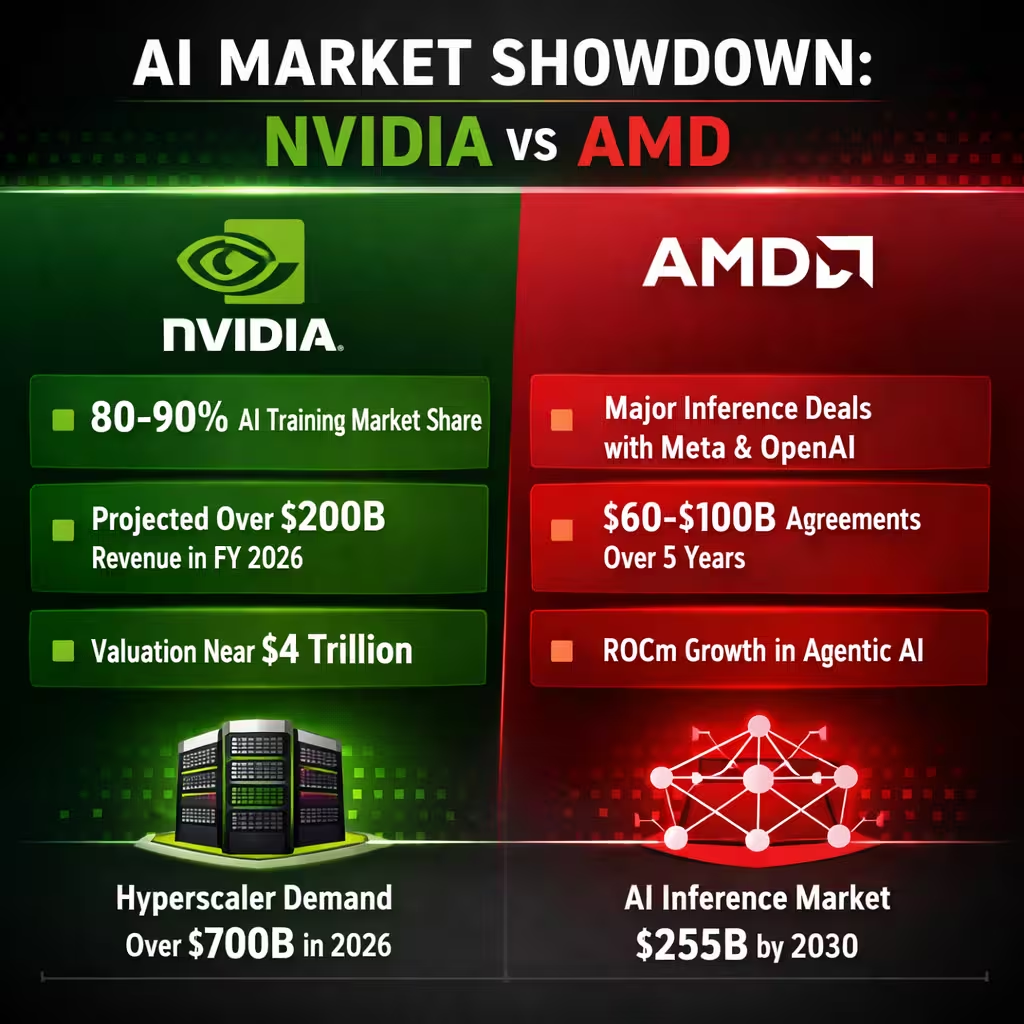

Nvidia dominates AI infrastructure: its GPUs and CUDA platform drove revenue to $216 billion in fiscal 2026 and its market cap to roughly $4.3 trillion, while AMD has secured major inference deals with OpenAI and Meta. This AI boom creates a sharp pain point for enterprises locked into a single supplier, as data center costs skyrocket and supply chains strain under hyperscaler spending that exceeds $700 billion in 2026 alone. Yet the solution lies in smart diversification, which unlocks efficiency gains and fresh revenue streams for forward-thinking leaders.

The global AI accelerator market is set to surpass $200 billion by the end of 2026, with Nvidia still commanding 80 to 90% of training workloads. AMD, meanwhile, is making rapid gains in inference and agentic AI through its ROCm software stack, which now powers custom Instinct MI450 GPUs. At L-Impact Solutions, we see this split as proof that the supercycle is big enough for both giants, yet Nvidia’s premium valuation leaves room for AMD to deliver outsized returns.

Recent data shows that Nvidia’s data center segment alone reached $193.7 billion for the full year, fueled by the Blackwell and Rubin platforms. Groq’s LPUs, integrated through Nvidia’s $20 billion licensing deal, now handle the low-latency decode phase of inference, delivering up to 35 times higher throughput per megawatt in agentic setups. AMD’s parallel momentum includes a six-gigawatt Meta agreement valued at $60 billion to $100 billion over five years, plus an identical OpenAI pact, momentum that could push its AI GPU revenue toward $40 billion in fiscal 2026.

This dynamic reflects projections that inference workloads will reach $255 billion by 2030, outpacing training in scale. Businesses that relied solely on CUDA now face rising licensing and power bills, while early AMD adopters report faster ROI on real-time AI agents. Our analysis at L-Impact Solutions confirms that the market rewards those who balance both ecosystems rather than betting on a single winner.

L-Impact Solutions Critique of Nvidia Dominance and AMD Momentum

Nvidia’s $216 billion revenue milestone underscores its unmatched CUDA ecosystem, yet it also exposes dangerous over-reliance risks that could stall enterprise AI adoption across industries. At L-Impact Solutions, we view the near-90% training market share as a red flag, because any disruption in Taiwan-based supply or in export rules could trigger immediate cost spikes of 20 to 30%. The pain hits hardest for mid-sized firms facing GPU shortages that delay projects by months while hyperscalers hoard capacity.

AMD’s ROCm progress and its Meta and OpenAI deals are closing the software gap, but ROCm still lags CUDA’s maturity, creating integration headaches that can slow deployment by up to 40% in mixed environments. Risks include AMD’s current single-digit share of the overall AI market, which leaves it vulnerable if inference growth slows or if hyperscalers’ custom silicon accelerates. We also flag energy consumption as a critical gap, since both vendors’ high-power racks push data center electricity bills beyond sustainable levels in the absence of efficiency mandates.

At a $4.3 trillion valuation, Nvidia is under pressure: any missed quarterly guidance could spark a 15 to 25% correction that ripples through other AI chip stocks, including AMD. The AI supercycle also masks broader gaps, such as limited open standards that lock enterprises into proprietary stacks and geopolitical vulnerabilities tied to concentrated manufacturing. L-Impact Solutions warns that ignoring these risks could turn today’s boom into tomorrow’s costly bottleneck for unprepared organizations.

Data-Backed Market Validation: Training vs Inference Economics Shift

Independent industry estimates indicate that the AI infrastructure market is entering a bifurcation phase: training remains capital-intensive but increasingly front-loaded, while inference scales with sustained enterprise demand. Nvidia continues to dominate high-margin training clusters, yet inference workloads, driven by real-time AI agents and copilots, are expanding at a faster compound rate, with industry projections placing inference spend at 1.5–2x training by 2030. This structural shift explains why enterprises are reassessing GPU allocation strategies beyond single-vendor dependency.

From a cost architecture perspective, training clusters typically run at low utilization once a model is deployed, whereas inference systems sustain continuous, latency-sensitive workloads, making cost per token and power efficiency the primary optimization levers. Here, alternative stacks built on AMD GPUs and emerging accelerators show measurable gains in performance per watt and total cost of ownership (TCO) in controlled deployments. Early adopters report 15–30% inference cost reductions when selectively offloading non-critical workloads from CUDA-bound environments.
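To make the cost-per-token lever concrete, here is a minimal Python sketch of a back-of-the-envelope model, assuming cost is simply straight-line hardware amortization plus electricity. The `InferenceNode` class, its fields, and any numbers plugged into it are hypothetical placeholders for illustration, not vendor or benchmark data.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class InferenceNode:
    """Hypothetical inference node; every field is an illustrative input."""
    capex_usd: float          # purchase price of the node
    lifetime_years: float     # straight-line amortization period
    power_kw: float           # average wall power, including cooling overhead
    tokens_per_second: float  # sustained serving throughput at full load


def cost_per_million_tokens(node: InferenceNode, usd_per_kwh: float,
                            utilization: float = 1.0) -> float:
    """Amortized hardware cost plus energy cost per million tokens served.

    Simplification: power draw is treated as constant regardless of
    utilization, which overstates energy cost for lightly loaded nodes.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    hardware_per_hour = node.capex_usd / (node.lifetime_years * HOURS_PER_YEAR)
    energy_per_hour = node.power_kw * usd_per_kwh
    tokens_per_hour = node.tokens_per_second * 3600 * utilization
    return (hardware_per_hour + energy_per_hour) / tokens_per_hour * 1_000_000
```

In this model, halving utilization doubles the cost per token, because hardware and energy costs accrue per hour whether or not tokens are served. That is why sustained utilization and power draw dominate inference economics.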

Crucially, hyperscaler disclosures and supply chain signals reinforce this transition: capital expenditure is increasingly directed toward heterogeneous compute architectures rather than monolithic GPU clusters. Enterprises aligning with this shift, by ring-fencing training workloads while modularizing inference layers, are better positioned to absorb pricing volatility, optimize energy consumption, and unlock incremental ROI. This data-backed reallocation strategy elevates infrastructure resilience from a tactical adjustment to a board-level capital allocation priority.

Solutions to Overcome AI Infrastructure Challenges

Vendor lock-in can be mitigated immediately with a hybrid GPU strategy that uses Nvidia CUDA for training and AMD ROCm for inference workloads across enterprise data centers. The first step is to audit current AI projects and allocate 30% of new capacity to AMD Instinct chips, which have shown competitive performance in agentic AI, as reflected in the recent deals with Meta and OpenAI. This shift is projected to reduce per-token inference costs by 15% to 25%, while keeping the Nvidia ecosystem for mission-critical training.
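The arithmetic behind a partial shift is worth making explicit: fleet-wide savings depend on both the share of traffic moved and the per-token cost gap on that share. The sketch below assumes a simple weighted average of two fleets. The cost inputs are hypothetical, and the function names are illustrative.

```python
def blended_cost_per_token(baseline_cost: float, alternative_cost: float,
                           alternative_share: float) -> float:
    """Weighted per-token cost after moving `alternative_share` of traffic
    from the baseline fleet to an alternative fleet."""
    if not 0.0 <= alternative_share <= 1.0:
        raise ValueError("alternative_share must be between 0 and 1")
    return ((1.0 - alternative_share) * baseline_cost
            + alternative_share * alternative_cost)


def fleet_savings_pct(baseline_cost: float, alternative_cost: float,
                      alternative_share: float) -> float:
    """Percentage reduction in fleet-wide per-token cost versus the baseline."""
    if baseline_cost <= 0:
        raise ValueError("baseline_cost must be positive")
    blended = blended_cost_per_token(baseline_cost, alternative_cost,
                                     alternative_share)
    return 100.0 * (1.0 - blended / baseline_cost)


if __name__ == "__main__":
    # Hypothetical inputs: the alternative fleet is 20% cheaper per token and
    # receives 30% of inference traffic.
    print(f"{fleet_savings_pct(1.0, 0.8, 0.3):.1f}% fleet-wide savings")
```

With these hypothetical inputs, the script prints `6.0% fleet-wide savings`. A 20% per-token gap applied to 30% of traffic yields 6% overall, so the size of the shifted share matters as much as the per-token discount.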

Investing in software portability tools can shorten the translation of CUDA code to ROCm from months to weeks, enabling smoother workload migration as AMD’s AI accelerator share moves toward a projected 18% by late 2026. Partnering with certified integrators can help optimize rack configurations, for example by pairing Groq LPUs (available through Nvidia’s licensing deal) for decode phases, where they deliver up to 35 times higher throughput per megawatt in agentic setups, with AMD GPUs for cost-sensitive inference. Track real-time metrics in unified dashboards so that efficiency gains are captured without modifying core applications.
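AMD’s HIPIFY tools (`hipify-perl` and `hipify-clang`) are the usual starting point for CUDA-to-ROCm porting. A large part of the mechanical work is renaming CUDA runtime calls to their HIP equivalents. The sketch below is a deliberately tiny, hypothetical Python illustration of that renaming step, not a substitute for HIPIFY. It covers only `cudaXxx` runtime identifiers and one header, and it ignores libraries such as cuBLAS, inline PTX, and device-specific tuning.

```python
import re

# Hypothetical, minimal header map; real HIPIFY covers many more headers.
HEADER_MAP = {"cuda_runtime.h": "hip/hip_runtime.h"}

# Most CUDA runtime identifiers follow a cudaXxx pattern (cudaMalloc,
# cudaMemcpy, cudaError_t, ...); their HIP counterparts use a hipXxx prefix.
RUNTIME_IDENTIFIER = re.compile(r"\bcuda([A-Z]\w*)")


def naive_hipify(source: str) -> str:
    """Rename known CUDA headers and cudaXxx runtime identifiers to HIP."""
    for cuda_header, hip_header in HEADER_MAP.items():
        source = source.replace(f"<{cuda_header}>", f"<{hip_header}>")
    return RUNTIME_IDENTIFIER.sub(r"hip\1", source)


if __name__ == "__main__":
    cuda_snippet = (
        "#include <cuda_runtime.h>\n"
        "cudaError_t err = cudaMalloc(&d_buf, bytes);\n"
        "cudaMemcpy(d_buf, h_buf, bytes, cudaMemcpyHostToDevice);\n"
    )
    print(naive_hipify(cuda_snippet))
```

Kernel launch syntax (`<<<...>>>`) can stay as-is because HIP’s compiler accepts it. This is one reason runtime-level ports can move quickly. Library calls and performance tuning are where most of the remaining effort tends to go.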

Financial flexibility improves with multi-year volume agreements, structured like the six-gigawatt Meta deal, that secure pricing discounts of 10% to 20% and performance-based warrants. Cloud bursting with providers that already run mixed fleets is a way to test AMD at scale before a full on-premises commitment. At L-Impact Solutions, we model these scenarios for clients, and balanced sourcing shows an anticipated return on investment (ROI) improvement of 40% within 18 months.

Budgets should reserve 10% of AI capital expenditure (capex) for custom silicon pilots and open-source inference frameworks, reducing long-term dependence on any single vendor. Piloting energy-efficient configurations, such as liquid cooling paired with lower-power AMD MI450 variants, can cut electricity spending by 30% while also meeting sustainability objectives. These practical measures turn the current Nvidia-AMD market dynamic into a competitive advantage rather than an operational constraint.

Prevention Steps for Future AI Supply and Innovation Risks

You can prevent supply disruptions by locking in diversified supplier contracts now that guarantee minimum allocations from both Nvidia and AMD through 2028, regardless of market shifts. Build internal ROCm expertise now so your engineers can maintain 95% code portability and avoid the integration delays that plague late adopters. Quarterly stress tests of your AI pipelines against potential 20% GPU price hikes keep operations resilient.
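A quarterly price-shock stress test can start very simply. The sketch below applies a uniform price increase to one vendor at a time and reports the worst-case rise in total accelerator spend. The vendor labels and spend figures are hypothetical, and a real test would also model lead times and contract floors.

```python
def spend_after_shock(spend_by_vendor: dict[str, float], shocked_vendor: str,
                      price_increase: float = 0.20) -> float:
    """Total spend if one vendor's prices rise by `price_increase`."""
    return sum(
        amount * (1.0 + price_increase) if vendor == shocked_vendor else amount
        for vendor, amount in spend_by_vendor.items()
    )


def worst_case_increase_pct(spend_by_vendor: dict[str, float],
                            price_increase: float = 0.20) -> float:
    """Largest percentage rise in total spend across single-vendor shocks."""
    baseline = sum(spend_by_vendor.values())
    if baseline <= 0:
        raise ValueError("total spend must be positive")
    worst = max(spend_after_shock(spend_by_vendor, vendor, price_increase)
                for vendor in spend_by_vendor)
    return 100.0 * (worst - baseline) / baseline


if __name__ == "__main__":
    # Hypothetical annual accelerator spend in millions of USD.
    single_vendor = {"nvidia": 100.0}
    hybrid = {"nvidia": 70.0, "amd": 30.0}
    for name, fleet in (("single vendor", single_vendor), ("hybrid", hybrid)):
        print(f"{name}: +{worst_case_increase_pct(fleet):.1f}% worst case")
```

With these hypothetical figures, a 20% shock to a sole vendor raises spend by 20%. The same shock to the larger vendor in a 70/30 split raises it by 14%, so exposure scales with the shocked vendor’s share of spend.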

To hedge against energy and regulatory volatility, mandate power-efficiency key performance indicators (KPIs) in every new deployment, targeting under 5 kilowatts per petaflop by 2027. Invest in modular rack designs that allow components to be swapped between current GPU generations and emerging LPU architectures, so you can adapt without full rip-and-replace cycles. Monitor AI accelerator market share reports monthly so you can adjust allocations proactively, before any single vendor’s dominance drops below a 70% threshold.
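The power-efficiency KPI reduces to a ratio check. The sketch below assumes each deployment is summarized by its average wall power and its rated petaflops. Which numeric precision the petaflop figure refers to (for example FP8 or FP16) is an assumption each organization would need to fix, and the rack names and figures are hypothetical.

```python
KPI_TARGET_KW_PER_PFLOP = 5.0  # target from the text; precision basis assumed


def kw_per_pflop(power_kw: float, pflops: float) -> float:
    """Power efficiency of a deployment in kilowatts per petaflop."""
    if pflops <= 0:
        raise ValueError("pflops must be positive")
    return power_kw / pflops


def failing_deployments(deployments: dict[str, tuple[float, float]],
                        target: float = KPI_TARGET_KW_PER_PFLOP) -> list[str]:
    """Names of deployments that do not come in under the kW-per-PFLOP target."""
    return sorted(
        name for name, (power_kw, pflops) in deployments.items()
        if kw_per_pflop(power_kw, pflops) >= target
    )


if __name__ == "__main__":
    # Hypothetical racks: (average wall power in kW, rated petaflops).
    racks = {"rack-a": (120.0, 30.0), "rack-b": (120.0, 20.0)}
    print(failing_deployments(racks))
```

Lower is better for this metric. In the hypothetical example, `rack-a` runs at 4 kW per petaflop and passes, while `rack-b` runs at 6 kW per petaflop and is flagged.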

To limit valuation and market-bubble risk, allocate 15% of the AI budget to scenario planning that models both 50% growth and flatline demand for the coming years. Participate in industry consortia focused on open standards to accelerate software maturity across ecosystems and progressively reduce proprietary lock-in. These proactive strategies keep the organization ahead of the next inflection point in an AI chip market worth more than $200 billion.
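The two planning scenarios can be compared with a compound-growth projection. This sketch assumes demand grows at a constant annual rate from a hypothetical current baseline and reports the gap against a fixed planned capacity. The units (accelerators, megawatts, or spend) are up to the planner.

```python
def project_demand(current: float, annual_growth: float,
                   years: int) -> list[float]:
    """Demand for year 0..years under constant compound growth."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return [current * (1.0 + annual_growth) ** year for year in range(years + 1)]


def capacity_gaps(current: float, planned_capacity: float, years: int,
                  scenarios: dict[str, float]) -> dict[str, float]:
    """Final-year demand minus planned capacity for each growth scenario.

    Positive values mean a shortfall; negative values mean idle capacity.
    """
    return {
        name: project_demand(current, growth, years)[-1] - planned_capacity
        for name, growth in scenarios.items()
    }


if __name__ == "__main__":
    # Hypothetical: 100 units of demand today, 200 units planned, 3-year horizon.
    scenarios = {"50% annual growth": 0.50, "flatline": 0.0}
    for name, gap in capacity_gaps(100.0, 200.0, 3, scenarios).items():
        print(f"{name}: {gap:+.1f} units")
```

Under these hypothetical inputs, the growth case leaves a shortfall of 137.5 units and the flatline case leaves 100 units idle. That spread is what a hedged procurement plan has to absorb.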

To secure talent pipelines, run cross-training programs that cover CUDA, ROCm, and LPU architectures, building a versatile workforce for a multi-vendor environment. Schedule annual third-party audits of your infrastructure diversification score to confirm at least 35% non-Nvidia capacity and avoid single points of failure. Clients of L-Impact Solutions who implement these measures report a 25% reduction in the volatility of AI project timelines and costs.
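The diversification check in such an audit can be expressed as a share calculation. This sketch assumes capacity is tallied per vendor in a common unit (for example accelerator count or megawatts). The vendor labels, the `incumbent` parameter, and the sample figures are hypothetical.

```python
def non_incumbent_share(capacity_by_vendor: dict[str, float],
                        incumbent: str = "nvidia") -> float:
    """Fraction of total capacity supplied by vendors other than `incumbent`."""
    total = sum(capacity_by_vendor.values())
    if total <= 0:
        raise ValueError("total capacity must be positive")
    others = sum(amount for vendor, amount in capacity_by_vendor.items()
                 if vendor != incumbent)
    return others / total


def passes_diversification_audit(capacity_by_vendor: dict[str, float],
                                 minimum_share: float = 0.35) -> bool:
    """True if non-incumbent capacity meets the minimum share (35% by default)."""
    return non_incumbent_share(capacity_by_vendor) >= minimum_share


if __name__ == "__main__":
    # Hypothetical fleet, in a common capacity unit.
    fleet = {"nvidia": 600.0, "amd": 300.0, "custom_silicon": 100.0}
    print(f"{non_incumbent_share(fleet):.0%} non-Nvidia, "
          f"audit passed: {passes_diversification_audit(fleet)}")
```

Summing the non-incumbent vendors directly, rather than subtracting the incumbent’s share from one, keeps the score correct when custom silicon or new vendors are added to the tally.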

L-Impact Solutions Key Takeaways

Nvidia’s $216 billion revenue story proves the AI supercycle is real, but its priced-in leadership means you should act now to capture AMD’s fresh growth potential in inference. At L-Impact Solutions, we strongly believe diversified strategies deliver superior returns, cutting costs by 20% and speeding deployment while guarding against supply and valuation shocks. Ignore these gaps at your peril: the next wave of agentic AI will reward flexible leaders, not locked-in followers.

You can turn today’s market split into a lasting competitive advantage by embracing both ecosystems. Our analysis shows that companies balancing Nvidia’s training muscle with AMD’s inference edge achieve 40% higher ROI within two years. The data is clear and the window is open, so move decisively to secure your place in the AI future.

Reference – AMD vs. Nvidia: The AI Supercycle Is Big Enough for Both. Here’s the Better Buy.